(🧵1/11) For the past year and a half, I've been investigating OpenAI and Sam Altman for @NewYorker. With my coauthor @andrewmarantz, I reviewed never-before-disclosed internal memos, obtained 200+ pages of documents related to a close colleague, including extensive private notes, and interviewed more than 100 people.

OpenAI was founded on the premise that A.I. could be the most dangerous invention in human history—and that its C.E.O. would need to be a person of uncommon integrity. We lay out the most detailed account yet of why Altman was ousted by board members and executives who came to believe he lacked that integrity, and ask: were they right to allege that he couldn't be trusted?

A thread on some of our findings:

(2/11) In the fall of 2023, OpenAI's chief scientist, Ilya Sutskever, acting at the behest of fellow board members and with other concerned colleagues, compiled some 70 pages of memos about Altman and his second-in-command, Greg Brockman—Slack messages and H.R. documents, some photographed on a cellphone to avoid detection on company devices. One memo begins with a list: "Sam exhibits a consistent pattern of . . ." The first item is "Lying."

Separately, Dario Amodei—who left to co-found Anthropic—kept years of private notes on Altman and Brockman. More than 200 pages of related documents, never before publicly disclosed, have circulated in Silicon Valley. In one document, Amodei writes that Altman's “words were almost certainly bullshit.”

(3/11) The colleagues who facilitated his ouster accuse him of a degree of deception that is untenable for any executive and dangerous for a leader of such a transformative technology. Mira Murati, who had given Sutskever material for his memos, said: “We need institutions worthy of the power they wield…The board sought feedback, and I shared what I was seeing. Everything I shared was accurate, and I stand behind all of it.”

Opinions vary on the extent to which we should consider these traits benign or malign. Altman attributes the criticism to a tendency, especially early in his career, “to be too much of a conflict avoider.”

(4/11) What does this trait look like in practice?

In late 2022, Altman assured the board that features in a forthcoming model had been approved by a safety panel. Board member Helen Toner requested documentation. She found that the most controversial features had not, in fact, been approved.

In 2023, as the company was preparing to release GPT-4 Turbo, Altman apparently told Murati that the model didn't need safety approval, citing the company's general counsel, Jason Kwon. But Kwon said he was "confused" about where Altman had gotten that idea.

(5/11) OpenAI increasingly has meaningful power to shape global security.

The piece describes in detail how its executives considered enriching the company by playing world powers—including China and Russia—against one another, perhaps starting a bidding war for advanced A.I. technology. (The plan was dropped after several employees talked about quitting. An OpenAI representative said it was just one of many ideas "batted around at a high level.")

(6/11) A legal review was an integral part of Altman's return. The review, by the law firm WilmerHale, was overseen by two board members selected in close conversation with Altman. People close to the investigation told us that no written report was ever produced—though many executives expected one, given the high-profile nature of the scandal. Only an 800-word announcement from OpenAI was released, acknowledging a "breakdown in trust."

Some of the lawyers involved defended their work as "an independent, careful, comprehensive review," and one of the new board members said there was "no need for a formal written report." Many others disagreed:

(7/11) OpenAI was established as a nonprofit, whose board had a duty to prioritize the safety of humanity over the company’s success, or even its survival. The company accepted charitable donations, and some former employees told us they joined because of assurances about the nonprofit and its noble mission, even taking pay cuts to do so.

But internal records show that the founders had private doubts about the nonprofit structure as early as 2017. Brockman, Altman's co-founder, wrote in a diary entry: "cannot say that we are committed to the non-profit . . . if three months later we're doing b-corp then it was a lie."

OpenAI has since recapitalized as a for-profit entity.

(8/11) Some former OpenAI researchers argue that the company has forfeited its original safety mission and accelerated an industry-wide race to the bottom.

The piece details a set of public and internal safety commitments that former researchers say were abandoned. Several safety-related teams at the company have been dissolved. The Future of Life Institute recently gave OpenAI an F on existential safety—alongside every other company, except for Anthropic, which got a D, and Google DeepMind, which got a D-.

Altman told us he still prioritizes safety, and that “we still will run safety projects, or at least safety-adjacent projects.”

(9/11) In the cut-throat race for A.I. dominance, these more substantive critiques of Altman commingle with no-holds-barred opposition efforts in which rivals have weaponized his personal life. Intermediaries directly connected to—and in at least one case compensated by—Elon Musk have circulated dozens of pages of salacious and unsubstantiated opposition research reflecting extensive surveillance: shell companies, personal contacts, interviews about a purported sex worker conducted at gay bars.

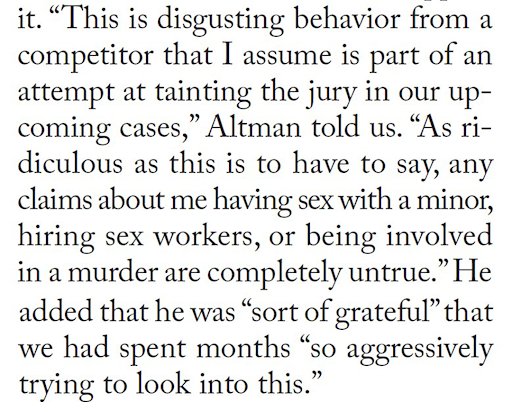

In the course of our reporting, multiple people within rival companies reached out to insinuate to us that Altman sexually pursues minors, a narrative persistent in Silicon Valley which appears to be untrue. We spent months looking into the matter and could find no evidence to support it.

(10/11) Why does all of this matter?

A.I. does already have life-saving applications, from medical research to weather warnings. Altman has supported OpenAI's growth with promises of a superabundant future.

But the dangers are also no longer a fantasy. A.I. is already being deployed in military operations around the world. Researchers have documented its power to rapidly identify chemical warfare agents. OpenAI faces seven wrongful-death lawsuits alleging ChatGPT prompted suicides and a murder. A.I. could soon cause severe labor disruption, perhaps eliminating millions of jobs. The U.S. economy is increasingly dependent on a few highly leveraged A.I. companies, and some experts warn of a bubble and recession risks.

OpenAI has one of the fastest cash burns of any startup in history, relying on partners that have borrowed vast sums. A board member told us, “The company levered up financially in a way that’s risky and scary right now.” (OpenAI disputes this.)

If the bubble pops, much more than one company is at stake.

(11/11) There is much more in the piece—on the saga of Altman's firing and return; a history of alleged similar complaints earlier in his career; gifts from foreign leaders and a security-clearance vetting process that turned up what one official described as "a lot of red flags," and more. And it looks at wider critiques from industry insiders of the current moment's anti-regulation trajectory—something that stands to affect all of us.

I hope you take the time for a long-read in this case, and subscribe to @NewYorker to support this kind of investigative reporting: newyorker.com/magazine/2026/0…